Our experts answer questions raised in the chat during our recent webinar Data Protection Strategy with Microsoft 365 Copilot In Mind.

There is a risk of over-classifying data as restricted purely to keep it from being mapped in Graph. How do we balance the use of Copilot and data classification requirements?

Over-classification means assigning a higher security classification to information than is necessary, often because of unclear guidelines or a misunderstanding of the data’s sensitivity.

The goal is to protect sensitive data while still allowing for its productive use. It’s about finding the right balance between security and accessibility.

Balancing the use of Copilot and data classification requirements involves a strategic approach to ensure that data is classified accurately without unnecessarily restricting access.

Steps to consider to help achieve this balance:

- Define Clear Data Classification Policies: Establish clear guidelines for what constitutes restricted data based on regulatory requirements and business needs.

- Educate Users: Train users on the importance of data classification and the potential risks of over-classification.

- Implement Role-Based Access Control (RBAC): Ensure that users have access only to the data necessary for their role, which reduces the temptation to over-classify data simply to restrict who can see it.

- Use Automation with Oversight: Leverage Copilot to automate data classification where possible, but also implement checks and balances where decisions are reviewed periodically.
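The policy and oversight steps above can be illustrated with a small sketch. It is not a Microsoft Purview or Copilot API; the labels, keywords, and function names are all hypothetical, and a real policy would be far richer. The idea is only that a written policy can suggest a label, and that documents labelled more strictly than the policy suggests are queued for periodic human review.

```python
from dataclasses import dataclass

# Hypothetical labels, ordered from least to most restrictive.
LABELS = ["Public", "General", "Confidential", "Restricted"]

# Hypothetical policy: keywords that justify each of the stricter labels.
POLICY = {
    "Restricted": {"passport number", "medical record"},
    "Confidential": {"salary", "contract"},
}


@dataclass
class Document:
    name: str
    text: str
    user_label: str  # label applied by the author


def suggested_label(doc: Document) -> str:
    """Return the most restrictive label whose keywords appear in the text."""
    text = doc.text.lower()
    for label in ("Restricted", "Confidential"):
        if any(keyword in text for keyword in POLICY[label]):
            return label
    return "General"


def needs_review(doc: Document) -> bool:
    """Flag documents labelled more strictly than the policy suggests."""
    return LABELS.index(doc.user_label) > LABELS.index(suggested_label(doc))
```

A reviewer would then look only at the flagged documents, which keeps a human in the loop without asking anyone to re-check every label.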

Source:

New Microsoft Purview features use AI to help secure and govern all your data | Microsoft Security Blog.

How do we strike the right balance between leveraging the efficiency and innovation of AI apps and Copilot, while ensuring data privacy, security, and compliance with evolving regulatory requirements in various jurisdictions?

We recommend a broad range of measures, but the first and most effective is to limit the data Copilot has access to and to ensure you have good information management systems in place.

Striking the right balance between leveraging AI for efficiency and innovation and ensuring data privacy, security, and compliance involves several key considerations, such as:

- Privacy by Design: Your company should integrate privacy into the development and operation of AI systems from the outset. This means considering privacy at every stage of the AI lifecycle, from data collection to model deployment.

- Transparency: It’s crucial for your company to be transparent about how you use AI and data.

- Regulatory Compliance: You must stay up to date and remain compliant with the data protection laws and regulations, and with any emerging AI regulations, that apply in the jurisdictions where you operate. This is an ongoing process that requires continuous monitoring and enhancement of controls.

- Bias and Fairness: AI systems should be designed to avoid biases and ensure fairness. This involves using diverse datasets for training and regularly testing AI models for biased outcomes.

- Ethical Considerations: Beyond legal compliance, you should consider the ethical implications of AI and strive to use AI in ways that are beneficial to your employees and clients and do not harm individuals.

By addressing these areas, you can harness the power of AI and Copilot while maintaining trust and safeguarding the privacy and security of your data.

Sources:

https://iabac.org/blog/ai-and-privacy-balancing-innovation-with-data-protection

https://aiempower.org/data-privacy-in-the-ai-era-balancing-innovation-and-protection/

https://datafloq.com/read/ethical-algorithm-balancing-ai-innovation-data-privacy/

https://www.dataleon.ai/en/blog/ai-and-privacy-balancing-innovation-and-data-protection

With Copilot, how do you make users accountable if there is no access to the prompts?

Accountability in the context of using AI tools like Copilot is primarily about ensuring that users are aware of and adhere to the guidelines and ethical standards set for the use of Copilot. It is imperative that your organisation either creates an AI policy or incorporates the use of AI into existing policies and processes, so that users are aware of the limitations and implications of AI usage within your organisation.

Measures that support accountability include:

- Education and Training: Educate users about the capabilities and limitations of Copilot, as well as the importance of ethical use.

- Transparency: While specific prompts may not be accessible, the outcomes of interactions with Copilot can be made transparent, allowing for scrutiny and review.

- Ethical AI Frameworks: Adopt ethical AI frameworks that emphasise accountability and provide a basis for evaluating user actions.

Copilot is designed to assist users with a wide range of tasks, but there are certain types of prompts that Copilot is programmed to not engage with or provide responses to.

These include:

- Personal Data: Prompts that request the sharing or processing of personal data or sensitive information.

- Illegal Activities: Prompts that involve illegal activities or encourage breaking the law.

- Harmful Content: Prompts that could lead to the creation of content that is harmful, abusive, or promotes hate speech.

- Sensitive Topics: Prompts about influential politicians, heads of state, or social identity groups such as those based on religion, race, politics, or gender.

- Copyrighted Material: Prompts that request the reproduction of copyrighted material such as books, music, and movies.

These limitations are in place to ensure ethical use, protect privacy, and comply with legal standards.

Sources:

Data, Privacy, and Security for Microsoft Copilot for Microsoft 365 | Microsoft Learn

Frequently asked questions: AI, Microsoft Copilot, and Microsoft Designer – Microsoft Support

What strategies or technologies can we implement alongside DLP to better manage and control data in shadow IT environments?

A good starting point is information protection labels, because the protection follows the content and documents wherever they end up.

There are also “perimeter” controls via DLP that can work in the client applications (Word, Outlook, Excel, etc.).

We recommend a strategy that uses the Microsoft Zero Trust model in combination with Microsoft Security & Compliance solutions to better manage and control data in shadow IT environments:

Microsoft Zero Trust:

Zero Trust Model: This security architecture assumes breach and verifies each request as though it originates from an open network. Key principles include:

- Verify Explicitly: Authenticate and authorise based on all available data points, including user identity, location, device health, service or workload, data classification, and anomalies.

- Use Least-Privilege Access: Limit user access with just-in-time and just-enough access (JIT/JEA), risk-based adaptive policies, and data protection.

- Assume Breach: Minimise blast radius, segment access, verify end-to-end encryption, and use analytics for visibility and threat detection.
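The principles above can be illustrated with a small decision function. It is only a sketch of the idea and not how Microsoft Entra Conditional Access or any other Microsoft product is implemented; the `AccessRequest` fields, the `evaluate` function, and the "Restricted" label are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class AccessRequest:
    user_authenticated: bool  # identity verified, e.g. with MFA
    device_compliant: bool    # device health check passed
    location_trusted: bool    # request comes from an expected location
    data_label: str           # sensitivity label of the requested data
    anomaly_detected: bool    # unusual behaviour flagged by analytics


def evaluate(request: AccessRequest) -> str:
    """Return 'allow', 'limited', or 'deny' for a single request."""
    # Verify explicitly: identity and anomalies are checked on every request.
    if not request.user_authenticated or request.anomaly_detected:
        return "deny"
    # Restricted data additionally requires a healthy device.
    if request.data_label == "Restricted" and not request.device_compliant:
        return "deny"
    # Least privilege: weaker signals earn reduced access, not full access.
    if not (request.device_compliant and request.location_trusted):
        return "limited"
    return "allow"
```

Every request is evaluated from scratch, with no implicit trust carried over from the network it arrives on, which is the sense in which the model "assumes breach".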

Microsoft Entra ID:

Cloud-Based Identity and Access Management: IT admins use Entra ID to control access to apps and resources based on business requirements.

App developers use Entra ID for single sign-on (SSO) and personalised experiences.

Microsoft 365, Office 365, Azure, and Dynamics CRM Online subscribers automatically use Entra ID for sign-in activities.

Microsoft Purview:

Data Security:

Microsoft Purview Information Protection: Classify and label data based on sensitivity, ensuring proper handling and access controls.

Microsoft Purview Insider Risk Management: Detect and mitigate insider threats by monitoring user behaviour and identifying anomalies.

Data Governance:

Microsoft Purview Data Catalog: Create an up-to-date map of your entire data estate, including data classification and lineage.

Microsoft Purview Data Estate Insights: Identify where sensitive data is stored in your estate.

Microsoft Purview Data Map: Generate insights about how your data is stored and used.

Microsoft Purview Data Policy: Manage access to data securely and at scale.

Microsoft Purview Data Sharing: Create a secure environment for data consumers to find valuable data.

Risk and Compliance:

Microsoft Purview Audit: Monitor and track data access and changes.

Microsoft Purview Communication Compliance: Ensure compliance with communication policies.

Microsoft Purview Compliance Manager: Minimise compliance risks and meet regulatory requirements.

Microsoft Purview Data Lifecycle Management: Manage data retention and disposal.

Microsoft Purview eDiscovery: Facilitate legal discovery processes.

Microsoft Security:

Microsoft Defender XDR (formerly Microsoft 365 Defender):

- Unrivalled threat intelligence and automated attack disruption.

- Unified extended detection and response (XDR) solution.

Microsoft Defender for Cloud:

- Strengthen security posture across multi-cloud and hybrid environments.

- Find weak spots in cloud configurations and protect workloads from evolving threats.

By combining these strategies and technologies, organisations can enhance data security, governance, and compliance while effectively managing shadow IT risks. Remember that a holistic approach is essential: one that covers technical solutions, people, policies, and user awareness.

Would you consider Microsoft as a controller or a processor when it comes to Copilot? Or a split approach: processor for Work and controller for Web? What do you think, especially taking into consideration inferences made by Copilot?

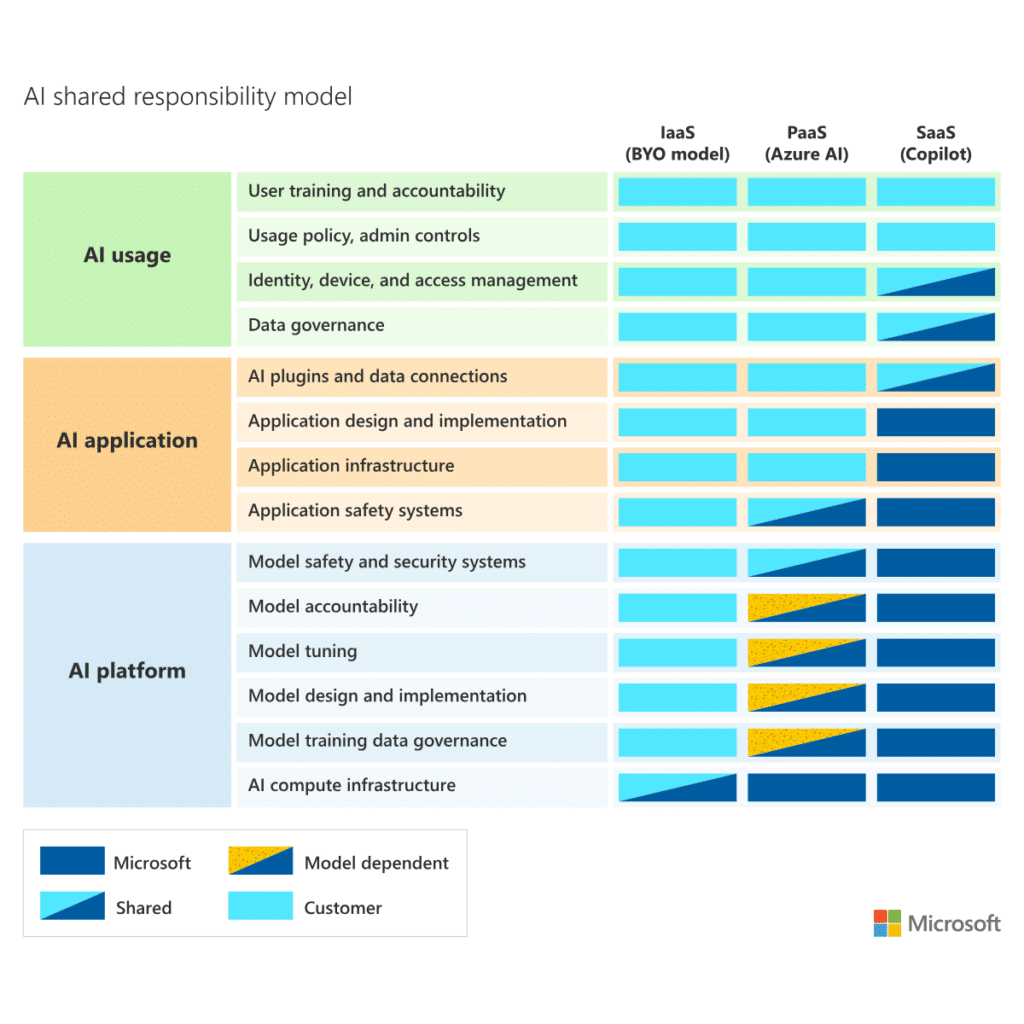

As with cloud services, you have options when implementing AI capabilities for your organization. Depending on which option you choose, you take responsibility for different parts of the necessary operations and policies needed to use AI safely.

The GDPR requires that controllers (such as organizations and developers using Microsoft’s enterprise online services) only use processors (such as Microsoft) that process personal data on the controller’s behalf and provide sufficient guarantees to meet key requirements of the GDPR. Microsoft provides these commitments to all customers of Microsoft Commercial Licensing programs in the DPA.

Customers of other generally available enterprise software licensed by Microsoft or their affiliates also enjoy the benefits of Microsoft’s GDPR commitments, to the extent the software processes personal data.

The diagram above illustrates the areas of responsibility between you and Microsoft according to the type of deployment.

Sources:

https://learn.microsoft.com/en-us/azure/security/fundamentals/shared-responsibility-ai

https://learn.microsoft.com/en-us/legal/gdpr

Copilot disclaimer – NB: Generative AI was consulted in the creation of this content.